Want to support this site? The Command Line for Web Developers is available to purchase on Amazon in print and Leanpub in most digital formats. Sales from the book help maintain and update this site and future editions. Reader support is available on Leanpub’s discussion page.

1. Overview

“From there, I realized that, for me, the difference between Linux and Windows is like driving a stick shift versus driving an automatic.” In the Beginning... Was the Command Line, Neal Stephenson1

The command line: it has no graphical interface, no guided tour, no help menu, just a prompt. We typically see the command prompt as a backdrop for television shows and movies where some “super-hacker” is busily typing at it like a dark wizard casting spells. This command line trope is so popular as a media prop that one software website has a page dedicated to its program’s numerous cameos in film.2

So, what does the command line have to do with programming for the web? We make websites after all. Web development isn’t exactly a popular Hollywood plot device (okay, maybe Facebook is), and until recently, making websites involved using a set of tools that didn’t use the command line at all.

However, the tools we use for building websites are no longer merely desktop applications. We rely on command line tools more and more for tasks like processing Sass files into CSS, checking our JavaScript for errors, and even testing our websites for optimization opportunities.

Being a web developer means knowing which software to use to meet today’s evolving standards. Having a strong understanding of the software developer ecosystem lets us pick the right tool for the job. No two websites are alike, and the tasks we have to accomplish vary from project to project. We can use command line tools to automate tasks and manage a lot of the heavy lifting that we would otherwise need to do manually.

Don’t worry about being an expert.

Desktop applications like Photoshop and Dreamweaver come with a lot of visual support for performing tasks. As a user of Photoshop, you understand how to navigate the hundreds of functions and their options. Desktop applications almost always have a menu bar and a consistent way to save, open, and create files; they even have an icon for launching the app.

Even though many tools in the command line have standard operating methods, these methods aren’t apparent. Using command tools can vary: they can have dozens of options for doing the seemingly simplest of tasks, and the documentation might as well be written in Greek for new users.

All that said, do not expect to become an expert in using the command line. I know, isn’t that the whole point of this book? It’s practically impossible to know every command and every option of those commands, and you shouldn’t have to. It is very possible, and not at all difficult, to understand the underlying principles and concepts of the command line without knowing every command. The goal of this book isn’t to turn you into some Unix wizard (there are plenty of hard-core books for that). This book’s purpose is to show you the best of what the command line interface (CLI) can do to make your job easier. So when you run into a code example that makes no sense, you will have the ability to break down the syntax into manageable chunks to understand its purpose.

But, first things first:

What is the command line?

Simply put, the command line is the interface that lets you type instructions directly to the computer.

How you get to the command line, and the environment it runs in, depends on your computer and how it’s set up. On a Windows PC, you can open the Command Prompt by typing cmd into the “Run” dialog from the Start menu. On a Mac, you access it by launching the Terminal app. Windows computers have multiple options for using CLIs, which we will go over later in the book.

The layer between you and the computer is known as the shell. The shell is what gets information from the computer and returns it to you when, for example, you ask for a list of the files in a directory. For many years, Macs came with the Bash shell by default; since macOS Catalina, the default is zsh, which handles the basic commands in much the same way. So when we talk about the command line, Bash, the Terminal app, or even the prompt, we are referring to the same thing: that which we use to type instructions directly to the computer.
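A short session can make the idea of the shell concrete. This is only a sketch: the scratch directory and the three file names are made up for the example, and the value printed by the last line depends on how your own machine is set up.

```shell
# Create a scratch directory holding a few hypothetical site files.
site=$(mktemp -d)
cd "$site"
touch index.html main.css app.js

# Ask the shell for the files in the current directory.
# Lists app.js, index.html, and main.css.
ls

# The same command with an option (-l, long format) and an argument (a file name):
#   command  option  argument
ls -l index.html

# Print the shell program your account uses by default (varies by system).
echo "$SHELL"
```

Most commands follow this same shape of a command name, optional flags, and arguments, which is what makes unfamiliar examples easier to break down.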

Why use the command line?

If you do not use the command line regularly, you might find it odd that a blank screen with a $ on it would be a better tool for making websites than desktop applications. After all, desktop applications are great tools that let you do a lot of what you would expect in building a website. These tools are superior when it comes to creating graphics, but when it comes to actual code, they have a reputation for falling short. Having pixel-perfect accuracy may be paramount for graphics and animation, but writing actual code is something best done manually.

As web developers, we are accustomed to working directly in code. Gone are the days when we used WYSIWYG applications.3 These applications have a limited knowledge of HTML. They often have no understanding of backend templating. And with frequent updates to HTML, CSS, and JavaScript, new browsers coming to the market, and existing browser updates, the software used for making websites is almost always out-of-date. These WYSIWYG editors also keep developers from learning code for as long as they confine them to a graphical user interface. It has become quite clear that any serious developer is better off working in the code itself rather than in these limited desktop applications.

But should all desktop applications be avoided? Of course not. Some applications have been widely adopted by the development community because of how well they meet the needs of today’s development landscape. But even though some applications may be popular today, that could shift with the changing trends in our industry. So it’s important to qualify which tools work for our needs.

The command line tools we cover in this book have many of the features necessary for web development:

- Flexibility.

- Version-control friendliness.

- Ability to work across teams and environments.

- Ability to work with other tools.

- Fast and quiet usage.

- Quick set-up time.

- Lots of community support.

Flexibility

Each website has its own set of requirements. Some sites consist entirely of static pages and images, while others run interactive functions, and some are enterprise resource portals. However, each of them needs tools that work with its custom requirements.

Having granular control over your project is crucial. One project may use a combination of technologies, like Sass and Compass with Durandal as a Single Page App framework, while another may be a Node.js web app that uses Express as its web framework and Jade for HTML templating. These differing requirements are common, and the tools we use need to be able to meet them.

Version-control friendliness

Version control has dramatically changed how we develop for the web, and Git has become the most widely used version control software among developers. Virtually no web project can happen successfully without some form of version control. Using version control not only allows developers to collaborate on the same code, but it also protects code integrity. If your software independently tracks the state of files or has its own format for file types, that can hurt your ability to manage different versions of the same file. Command line tools today are built with Git in mind, and many rely on Git themselves. This reliance allows developers to switch between different versions or get changes from other developers and continue development without issue.

Ability to work across teams and environments

Similarly, software should be able to work with shared standards without requiring major rewrites. Some software will expect the code to be formatted in a specific way. In fact, merely editing a small portion of a file can cause the entire file to be rewritten.

For example, let’s say you have to fix a bug that causes your client’s home page to not show the page footer. Upon investigation, you realize that there is some malformed HTML, and you rewrite the footer to display correctly. Your coworker gets the latest change and updates the page to complete their task. Later, you are told that the footer needs an update with some new copy. When you look at the page, the footer code looks completely different, as if someone rewrote the whole thing. Not only that, but getting the change caused merge errors with Git. The problem didn’t happen because your coworker redid all your hard work, but because the software only manages files formatted a certain way, and as a result, the edit at one part of the page ended up affecting the entire page.

If you are using a desktop application that has its own proprietary way of tracking files, changes you get from another developer can break your app. You may need to reconfigure your application for new files or new versions of existing files; you may even need to upgrade your application if its file format is incompatible. The software you use must be capable of dealing with new code changes and files as part of the process of software development. By using a common set of standards, command line tools can operate similarly across multiple developers and systems.

Ability to work with other tools

As software has become more sophisticated, proprietary file formats have given way to standards (or at the very least, a de facto standard) so data can be shared across applications. For example, a Microsoft Word document can be opened and viewed by many other applications. This compatibility wasn’t always the case. Before, only MS Office apps could read and write their own files. Today’s Adobe Photoshop can work with other applications from the Adobe family much more easily than in years prior. Additionally, other applications can read .psd (Photoshop) files. The ability to work with the same data across applications has proven to be a valuable trait in choosing which software is best suited for your work.

No single application can manage every task involved in making websites. The tools we use need to be able to refer to each other like a collection of tools that work together. Software like Git and Bash and Ruby can refer to each other to use their unique programming advantages. These relationships provide an ecosystem for software that is effective and open and allows for developers to focus on their work.

Fast and quiet usage

Writing code requires a lot of attention. That attention is usually taken away as soon as we save a file and begin the process of checking our code. Checking the code requires testing, optimizing, and viewing in the browser, among other tasks. So anything that can be automated will cut down on the number of tasks we have to complete, helping make writing code easier.

When we save a file, a number of tasks may execute depending on the context. Sass files trigger a compiler. JavaScript files trigger a linter for code style and errors. Browsers reload our files and update the page. By having these tasks running for you, more of your attention and energy can be spent on coding.

Quick set-up time

Whether you are jumping into an existing project or starting a new website from scratch, you want to get up and coding right away.

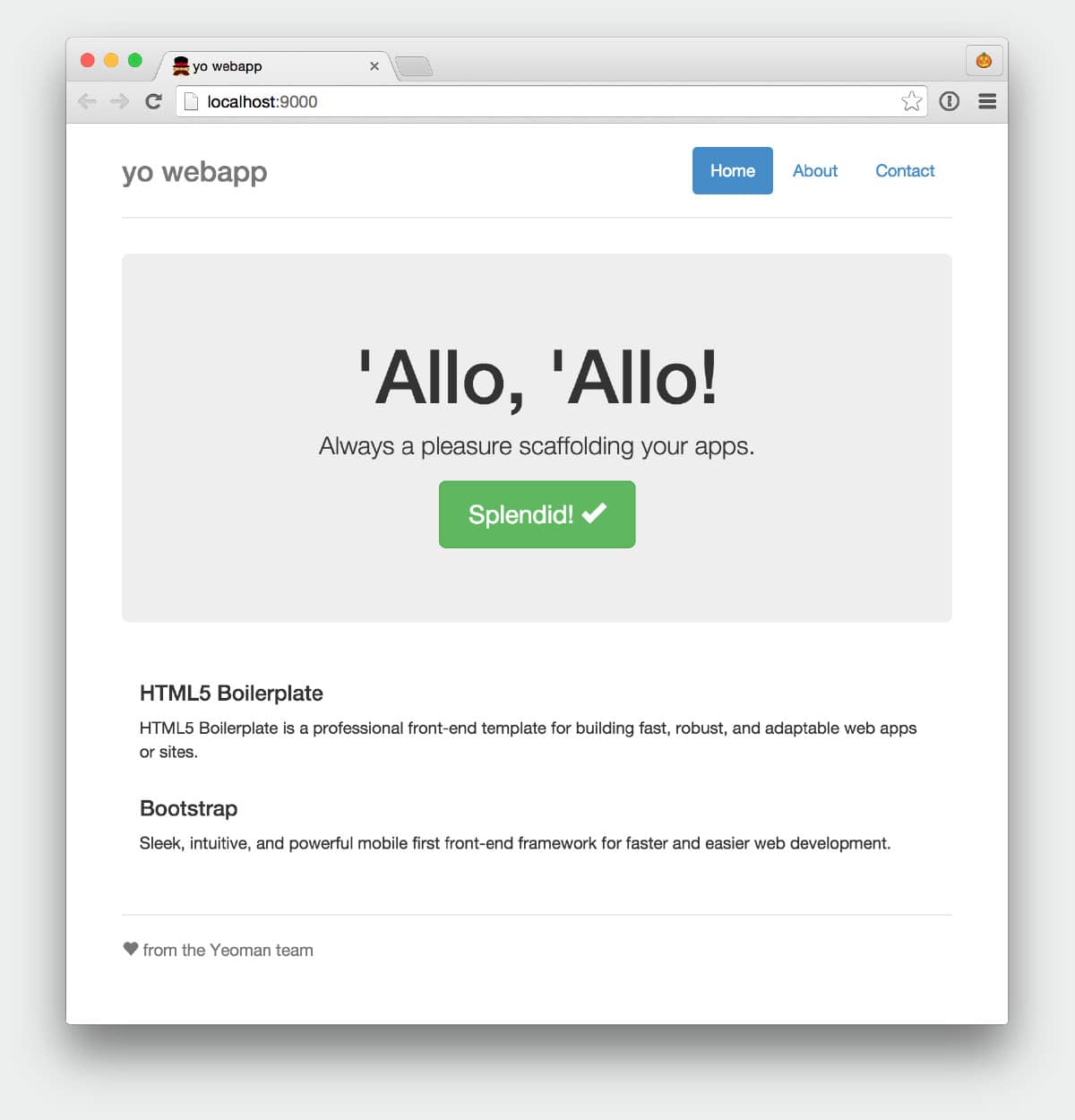

Prebuilt packages like this website generator are not new, but they are a good example of the kind of “out of the box” starter kits that developers are building for the command line. yo is the command for Yeoman, a program that comes with an ever-growing list of scaffolds for quick development. The program includes testing, automatic reloading for the browser, and many other features.

Software shouldn’t require a lot of attention and effort every time you want to begin a new project. It should either have everything you need to begin quickly or be able to provide the means to get it for you through third-party packages, plugins, or templates. Programs like Yeoman exist to give developers the tools they need to write great websites.

Lots of community support

Having a strong user base behind a software package is a good sign that it is worth investing in. With open-source software, the user base is often made up of contributors to its code. Most command line tools are open source and maintained by the people that use them daily. Using open-source software offers many advantages: the software is not owned by any single vendor, so all users can contribute to its development. Users also contribute to its ongoing community support, so finding answers to your questions is just a website away.

A brief history of Bash

When we talk about the command line, we are referring to the shell, which is the interface that takes your commands and gives them to the computer to perform. Just as the Windows environment and Finder for Mac use icons for accessing the file system, Bash is a shell that gives you access to the computer. So what is Bash and what is it doing on your machine? More importantly, what does Bash have to do with developing websites?

Bash, which stands for Bourne-Again SHell, has roots going back to the late 1970s with its predecessor, sh (aka Bourne Shell). Over the years, as programs competed with each other for popularity in Unix systems, new shells emerged and forked from each other into a wide variety. By the early 1980s, the increasing variety of programs and shells called for some standardization. The standards groups of the Institute of Electrical and Electronics Engineers (IEEE) and the International Organization for Standardization (ISO) worked to produce the Unix standard, POSIX (Portable Operating System Interface), in 1988. This standardization let programmers write programs that were compatible across different systems. Today, most of these shells are very similar to each other, and their features do not greatly differ. Bash is the most widely used shell, although alternative and competing shells are still popular.

If you were fortunate enough to be a part of the world wide web when it was just starting out, you might have written HTML files on your server that required the use of a terminal. University students used their campus accounts to connect over Telnet to university servers and write HTML pages with the Unix program Pico. Even then, we were using the command line to make websites.

After the sudden rise of popularity in WYSIWYG software and Flash animation, we didn’t see much use of the command line in front-end development until Macs started shipping with a Unix shell in OS X in 2001 (Bash became the default shell in 2003). With Apache Web Server and the OS X “Web Sharing” setting that came with every Mac, developers could work on their sites locally. PHP and MySQL let web developers create sites with dynamic content such as shopping carts and blogs, and WordPress, first released in 2003, made building dynamic web pages much easier and incredibly popular.

Today’s development landscape

The expectations for websites today are much different from what we expected back in 2003. Today, our sites have to perform fast across various bandwidths and devices. Sites may be a collection of simple static pages or elaborate web apps. We need to use new strategies for making our code scalable, error-free, and—again—performant. So it stands to reason that our software needs to keep up with us.

One size does not fit all. Not every site can be a WordPress site. Our toolbelt of software has become a large collection of smaller tools that we can piece together to fit the site’s requirements. Take, for example, a static site that on the surface is just a collection of HTML, JS, and CSS. However, your development team is using Sass as a styling language, none of the images have been optimized, and all of the JavaScript is using ES2015, but the site needs to support older browsers. This presents several challenges before coding has even begun.

You could take your JavaScript code and paste it into some Babel web form to get the ES5 equivalent, but you’d have to do that every time you wanted to test your site. Similarly, you could take all images and export them through Photoshop, but they might not be the final version, and you might not even have Photoshop installed!

The solution is to take all of these development requirements and move them to automated tasks that you can use over and over.

This collection of tools working together helps us to set up and generate the code and libraries used in building a website and helps us to publish and distribute our code. Generating code usually involves any process that can be automated to save time for the developer. Distributing code includes getting code to other developers on your team and publishing code to servers.

Today’s developer tools

The chances are that any project you work on today uses a preprocessor syntax, linting, and some sort of testing. Compiling and linting are tasks that should run automatically so that you can focus on your code. Other tasks to perform during the development process may include these:

- Compile Sass to CSS.

- Follow an SCSS style guide.

- Test JavaScript for errors.

- Follow a JavaScript style guide.

- Build a sprite sheet from assets.

- Deploy to servers for testing.

- Optimize images for production.

- Remove unused CSS for production.

- Bundle JavaScript files together.

- Minify JavaScript and CSS for production.

These are some, if not all, of the common tasks that you will likely need to perform. By automating these tasks, not only do we speed up the development process, but we make our work more accurate as well. We can bring automation to the majority of the tasks we perform today and replace older, outdated methods with automated ones that end up being more efficient.

We can manage automation through task runners. Task runners are processes that watch for events to occur and then perform a series of assigned tasks. For example, when saving a Sass file, a task runner like Gulp detects the changes to the file and can then compile that to CSS as well as update the browser to reflect that change.
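The watch-then-run idea can be sketched in plain shell. This is an illustration of the principle only, not how Gulp works internally: the file name styles.scss, the function names, and the stand-in “compile” step (a plain copy rather than a real Sass compiler) are all assumptions made for the example.

```shell
# Work in a throwaway directory.
work=$(mktemp -d)
cd "$work"

# Hypothetical source file; a real project would hold Sass here.
echo 'body { color: black; }' > styles.scss

# Stand-in task: a real task runner would call a Sass compiler instead of cp.
compile() {
  cp styles.scss styles.css
  echo "compiled styles.scss -> styles.css"
}

# A fingerprint of the file's contents, used to detect changes.
fingerprint() {
  cksum < styles.scss
}

last=""

# Run the task only when the file has changed since the last check.
check_and_run() {
  current=$(fingerprint)
  if [ "$current" != "$last" ]; then
    compile
    last=$current
  fi
}

check_and_run                                # first check: compiles
check_and_run                                # nothing changed: does nothing
echo 'body { color: navy; }' > styles.scss   # simulate saving an edit
check_and_run                                # change detected: compiles again
```

A real watcher would keep checking in a loop (for example, calling check_and_run once a second) and would usually chain several tasks, such as compiling and then reloading the browser.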

The Power of Node

Node is one of the most widely used command line programs in web development today. It is a JavaScript runtime that works outside the browser, and because it can accept HTTP requests, it can act as a web server. That means you can run a JavaScript file through the node command, and it will execute the code much as a browser would. Unlike a browser, however, it can access the computer’s file system. So developers who are already familiar with JavaScript can build programs based on Node to run as command line tools.
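As a small illustration, the following sketch writes a JavaScript file and runs it with the node command. The script name list-files.js is made up for the example, and the block assumes Node may or may not be installed, so it checks first. The script uses Node’s built-in fs module to read the current directory, which JavaScript running in a browser page cannot do.

```shell
# Work in a throwaway directory.
work=$(mktemp -d)
cd "$work"

# A hypothetical script: ordinary JavaScript plus Node's built-in fs module.
cat > list-files.js <<'EOF'
const fs = require('fs');
// Read the names of the files in the current directory.
const names = fs.readdirSync('.');
console.log('Files here: ' + names.join(', '));
EOF

# Run the script with Node if it is available on this machine.
if command -v node >/dev/null 2>&1; then
  node list-files.js
else
  echo "node is not installed; skipping"
fi
```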

This seamlessness has made Node incredibly popular. And Node Package Manager (npm), a Node tool that installs Node programs and their dependencies, made it possible to easily add server functionality and tools for websites. These new tools let us easily spin up local servers, generate frameworks, collaborate across developers, test, and deploy all from within the command line of Bash. Keep in mind that these contributions to our developer landscape weren’t coming from Adobe or Apple—they were coming from other web developers!

Probably the biggest benefit that comes out of using npm and Node is their ability to easily add, update, and remove packages on a granular level. Some packages like Yeoman can be installed on a “global” level that can be used anywhere on your computer, whereas project-specific packages can also be installed as needed per website project.

Yeoman is a collection of tools that works together to build and manage a site and its possible dependencies. These tools are npm, Bower, Gulp, and a Yeoman Generator. The generator is essentially a recipe of specific requirements for what needs to be produced. A standard HTML5 website would expect to have some CSS framework like Bootstrap or Foundation, whereas an Angular generator would need to include the Angular.js library and other related libraries.

Yeoman’s components are npm, Gulp, Bower, and the HTML that’s been generated. Gulp, the task runner, is what we will rely on for the bulk of the heavy lifting during this project. Bower is what we use to add any front-end libraries we want to include. npm is used for any back-end libraries that are required for Gulp to run successfully.

The files Yeoman created include directories for your code (app) and testing (test). The directories node_modules and bower_components are managed by npm and Bower respectively. Git files are included for version control.

$ gulp serve

This command starts a new Node server and opens a new browser window at http://localhost:9000.

Behind the scenes, Gulp is still running. Gulp watches for changes to files and runs the appropriate tasks based on the file that changed. For example, make a change to styles/main.scss and Gulp compiles the Sass file to CSS, adds any necessary vendor prefixes to the CSS file, and finally updates the browser to reflect that change.

Managing front-end and back-end libraries

Bower is very easy to use for adding new front-end libraries to our project. Instead of going to GitHub or a website to download a zip file with the library, we are going to use Bower.

Both Bower and npm have online registries that track popular and supported libraries you can add to your project. Installing a library like jQuery for the front-end or Jade to compile HTML files on the back-end is an easy way to make the configurations you need for your site.

Later in the book, we cover npm in greater depth. npm can also manage front-end libraries, and we will go over how the two tools differ.

Using Git for version control

Version control is an essential component of building websites. Without a good strategy to safeguard your assets, you are coding without a net, and mistakes will happen. This applies whether you are building a website on your own or with a team.

Git has become one of the most popular version control software programs today, yet it was written to run entirely from the command line. Desktop applications are available, but they are just wrappers for the command line API.

Git has been around for a while, but only in the past few years has it become a favorite among web developers due to sites like GitHub that manage millions of Git repositories for free.4

Using Git to track changes

Unlike centralized version control software, Git does not require a server. It can be used offline on your local machine. You can make any number of changes to a file and rely on Git to record your changes with every commit.

Let’s say you had to make a small change to your code:

<title>My title change.</title>

It’s a small change, but Git detects it when we check the status of the repository.

In Figure 1-10, we have checked the status of our local repository, and it came back with app/index.html as being modified. Then we commit that change to the repository. The message it gives back from that commit shows that one file changed, which we knew already. However, it also shows one insertion and one deletion. What does this mean?

The command used in Figure 1-11 did not show us two different versions of app/index.html; it showed us only what differed on line 5. Git tracks changes in data, not files. This is an important distinction between Git and other version control software. Git is less concerned about the files that have changed than the data in the files. This lets you and other developers work on the same file because we are just tracking the changes to the code.
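The status, diff, and commit steps described above can be tried in a throwaway repository. This is a sketch rather than a reproduction of the figures: the page contents, the original title text, the commit messages, and the user name and email are all placeholders chosen for the example.

```shell
# Work in a throwaway repository so nothing else on your machine is touched.
repo=$(mktemp -d)
cd "$repo"
git init --quiet
git config user.name "Example Dev"         # placeholder identity for the commits
git config user.email "dev@example.com"

# A hypothetical page with its title on line 5.
mkdir app
printf '%s\n' '<!doctype html>' '<html>' '<head>' '<meta charset="utf-8">' \
  '<title>My title</title>' '</head>' '<body></body>' '</html>' > app/index.html
git add app/index.html
git commit --quiet -m "Add home page"

# Make the small change to the title.
sed 's/My title/My title change./' app/index.html > app/index.tmp
mv app/index.tmp app/index.html

# Git reports app/index.html as modified.
git status --short

# Git shows only the line that changed, not two copies of the file.
git --no-pager diff

# Commit the change; the summary reports 1 insertion and 1 deletion,
# because the old line 5 was removed and the new line 5 was added.
git commit -am "Change the page title"
```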

Using Git to collaborate with multiple developers

Git provides the means for multiple developers to work together on the same set of files through branching. A branch is an independent line of work that starts from the existing code of a project. With a branch, you can make any changes to your code without affecting the original. Once you are at a point where your changes are ready to be a part of the main project, you merge your branch changes into the original.
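A branch-and-merge round trip can be sketched in a scratch repository. The file footer.html, the branch names main and update-footer, the commit messages, and the user details are hypothetical choices for this example.

```shell
# Work in a throwaway repository.
repo=$(mktemp -d)
cd "$repo"
git init --quiet
git symbolic-ref HEAD refs/heads/main      # name the first branch "main"
git config user.name "Example Dev"         # placeholder identity for the commits
git config user.email "dev@example.com"

# The original code on the main branch.
echo '<footer>Old copy</footer>' > footer.html
git add footer.html
git commit --quiet -m "Add footer"

# Create a branch, switch to it, and change the file there.
git checkout --quiet -b update-footer
echo '<footer>New copy</footer>' > footer.html
git commit --quiet -am "Update footer copy"

# Back on main, the original is untouched.
git checkout --quiet main
cat footer.html      # still shows the old copy

# Merge the branch once the change is ready.
git merge --quiet update-footer
cat footer.html      # now shows the new copy
```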

Git is a great tool for developers to share code, collaborate, get updates, review changes, and even roll back changes if needed.

Software is always changing

One last thing to know about the tools we use: they are going to shift constantly. I can’t think of many other industries that change as rapidly as web development. As we adopt these tools into our repertoire, we tend to push their functionality to the point where we need new tools.

What I understood about npm and Bower when I began writing this book was out-of-date by the time I finished, and I needed to revisit what I wrote. However, the principles behind the technology haven’t changed much at all. They still rely on the command line; they depend on each other to function, and they only get more elaborate and mature the more we as a community foster their growth.

Summary

There is a lot of fear and intimidation for those unfamiliar with the command line. There is no elaborate user interface, no point and click, no drag and drop. The only visual feedback is the Bash prompt. But the truth is that Bash is quite easy to learn. It has a gentle learning curve, and the only step needed to begin is to dive right in.

Now that we’ve gone over a couple of examples of what we can do with the command line, let’s learn some of the fundamental commands that you will use day-to-day.

1. http://www.cryptonomicon.com/beginning.html

2. http://nmap.org/movies/

3. What You See Is What You Get, a term for drag-and-drop interfaces used in building web pages

4. https://github.com/blog/841-those-are-some-big-numbers

Martini Lab A blog by Chris Williams
